GSA SER Link Lists

Understanding the Power of GSA SER Link Lists

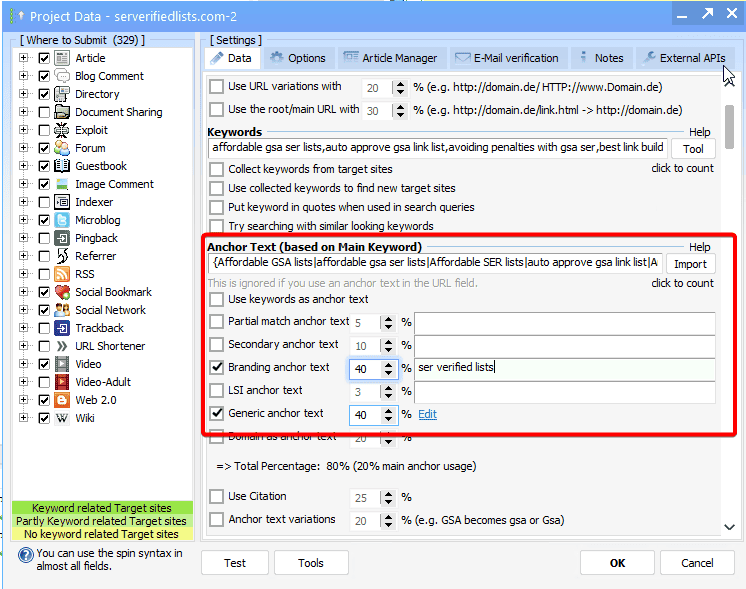

If you work in the world of search engine optimization, you’ve likely encountered the need to build backlinks at scale. One tool that consistently appears in this conversation is GSA Search Engine Ranker, and at the heart of any successful campaign lies a well-curated, high-quality source of targets. This is exactly where GSA SER link lists come into play. They are not just a convenience; they are the fuel that drives the entire engine.

What Exactly Are GSA SER Link Lists?

A GSA SER link list is essentially a collection of URLs, footprints, and platform identifiers that the software can parse and use to create accounts, post content, and ultimately generate backlinks. These lists come in various formats, often divided by platform type. You might find lists specifically for comment spam targets, guestbook pages, forum profiles, image comments, or even high-value platforms like expired web 2.0 properties. The software reads these lists and systematically attempts to register and place a link, automating a process that would take a human years to complete manually.

Why Pre-Made Lists Matter

When you first install GSA SER, it ships with some basic global site lists. However, the real magic happens when you import specialized, verified GSA SER link lists. The internet decays rapidly. Tools like ScrapeBox or the built-in target URL scraper are excellent, but they often yield a massive number of dead URLs, platforms that have removed signup forms, or sites that simply no longer exist. A curated, recently verified list cuts through the noise. It minimizes the time the software wastes on dead endpoints and maximizes verified links per minute, directly improving your submission rate and success ratio.

Key Components of a High-Performing Link List

Not all lists are created equal. A raw scrape of a billion URLs sounds impressive, but performance depends entirely on quality. A strategic list contains pre-identified platform footprints. For example, it records that a URL belongs to a WordPress article with open comments rather than a random static HTML page. The best GSA SER link lists often categorize targets by engine type. Separating guestbook engines from social network engines allows for hyper-focused template usage. You wouldn't send a thousand-word article to a guestbook that only allows fifty characters. This segregation prevents wasted processing power and reduces the ban rate on the server side.

The Critical Role of Spintax and Context

A link list is only half the battle. To truly leverage a massive list without leaving a footprint, operators pair their targets with sophisticated spintax. However, the context of the list matters deeply. If you are using a list of high-end expired article directories, your content and anchor text must be markedly different from what you would use on a generic public comment list. The surrounding topic of the target page influences the relevance of your link. Modern search algorithms look at the context of a link's neighborhood, so using a contextual GSA SER link list tailored to a specific niche can offer more resilience than blasting a general list, even if the general list contains more URLs.

Building and Verifying Your Own Resources

Relying solely on public GSA SER link lists is risky because the targets become saturated quickly. A URL that has been spammed by ten thousand users over a month will likely have comment moderation set to maximum, or worse, its links may be automatically tagged with the rel="nofollow" attribute by the webmaster’s security plugin. Advanced GSA users therefore develop a workflow of footprint mining and verification. They use custom scrape footprints to hunt for fresh platforms. Then, they run these scraped URLs through a dummy project in GSA SER on a test machine, filtering out any targets that fail the signup test. Only the targets that survive this verification process make it into the final, high-power GSA SER link lists used for money sites or tier-1 properties.

Avoiding the Google Spam Trap

There is a common misconception that the volume of links is the primary metric. In the current landscape, velocity and toxicity are far more critical. A bad batch of links (for example, links from sites flagged for malware or filled with purely machine-generated gibberish) can quickly lead to manual penalties if pointed directly at a site you care about. Therefore, sophisticated practitioners use GSA SER link lists as part of a tiered linking structure. The raw, high-velocity lists are directed at tier-2 or tier-3 buffers. These practitioners then create a filtered, hand-crafted list of elite platforms, often obtained from private membership areas or competitor reverse-engineering, to point at their tier-1 properties. This layered approach sanitizes the link juice, ensuring that only trust and power flow upward toward the main asset.

Maintaining Velocity and Freshness

The half-life of a link list is shorter than most people think. A server that was online yesterday might be shut down today. A forum administrator might patch the software tomorrow, breaking the registration engine. To maintain consistent ranking signals, you cannot simply load a single file and forget about it. A smart SEO setup schedules recurring scrapes and regularly imports fresh GSA SER link lists. This cyclical refreshing process ensures that the software is always hitting active targets. Stagnant lists lead to a decaying number of live links, a pattern that advanced forensic link auditors can easily spot. Fresh, verified lists create a natural growth curve of live links that mimics organic viral success rather than a robotic spike and crash.
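The "half-life" idea above can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that live targets in a list decay exponentially; the article does not claim this model, and the 30-day half-life and the function names are hypothetical, not measured values or part of GSA SER.

```python
import math


def live_fraction(days_elapsed: float, half_life_days: float) -> float:
    """Fraction of targets still live after days_elapsed.

    Assumes simple exponential decay (an illustrative model only).
    """
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    return 0.5 ** (days_elapsed / half_life_days)


def days_until_fraction(target_fraction: float, half_life_days: float) -> float:
    """Days until only target_fraction of the list is still live."""
    if not 0 < target_fraction <= 1:
        raise ValueError("target_fraction must be in (0, 1]")
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    # Solve 0.5 ** (t / half_life) = target_fraction for t.
    return -half_life_days * math.log2(target_fraction)


if __name__ == "__main__":
    half_life = 30.0  # hypothetical half-life in days
    for day in (0, 30, 60, 90):
        print(f"day {day:>3}: {live_fraction(day, half_life):.1%} live")
```

Under this assumed model, a list with a 30-day half-life is down to a quarter of its live targets after 60 days, which is one way to reason about how often a refresh is needed.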
